How to Run an Ideation Session with AI: A Step-by-Step Guide (2026)

Running an ideation session with AI produces better ideas than running one without it — but only if you structure the session correctly first. This guide covers all 8 steps: from writing your problem frame to documenting the decision and the path not taken.

Category: Creative Process

Author: Sara de Klein, Head of Product at Storyflow

Published: 2026-03-06 • 16 min read

Topics: Creative Process

How do you run an ideation session with AI?

Frame your problem as a How Might We question, run human divergence first (15–20 minutes without AI), then use AI to expand and challenge what your team generated. Cluster ideas by insight, score clusters against defined criteria, and develop your top two directions before choosing. The process takes 75–90 minutes plus 24-hour pre-work. AI improves the session — it does not replace the structured process.

The Problem With How Most People Ideate

The typical approach goes like this: a deadline arrives, someone books a meeting, the team spends an hour on a whiteboard or Miro board, the best-sounding idea goes into the project brief, and six weeks later you are halfway through execution wondering why the concept feels generic. The problem is not the people in the room — it is the process. The good ideas are in there. They never got the conditions to surface.

Adding AI to a broken process makes it faster and more broken. If your problem is undefined, AI will generate confident-sounding variations of the same concept you already have. If your team evaluates ideas as they are generated, AI joining the conversation does not fix the dynamic. The process needs fixing first. The AI comes second.

What You Need Before You Start

A specific problem to solve: Not a goal ('get more views') but a genuine friction point you are trying to address. The more precisely framed, the better the session.

A dedicated 60–90 minute window: Ideation sessions that run longer than 90 minutes produce diminishing returns. Cognitive fatigue reduces idea quality faster than most people expect.

Participants briefed in advance: Send the problem context before the session starts. Cold divergence — where people encounter the problem for the first time in the meeting — produces obvious ideas.

A defined output format: Know what you are leaving with. A single concept direction? A shortlist of three? Ambiguous outputs produce inconclusive sessions.

Your evaluation criteria written before you start: What makes an idea worth pursuing? Feasibility, novelty, alignment with strategy? Write this before divergence, not after.

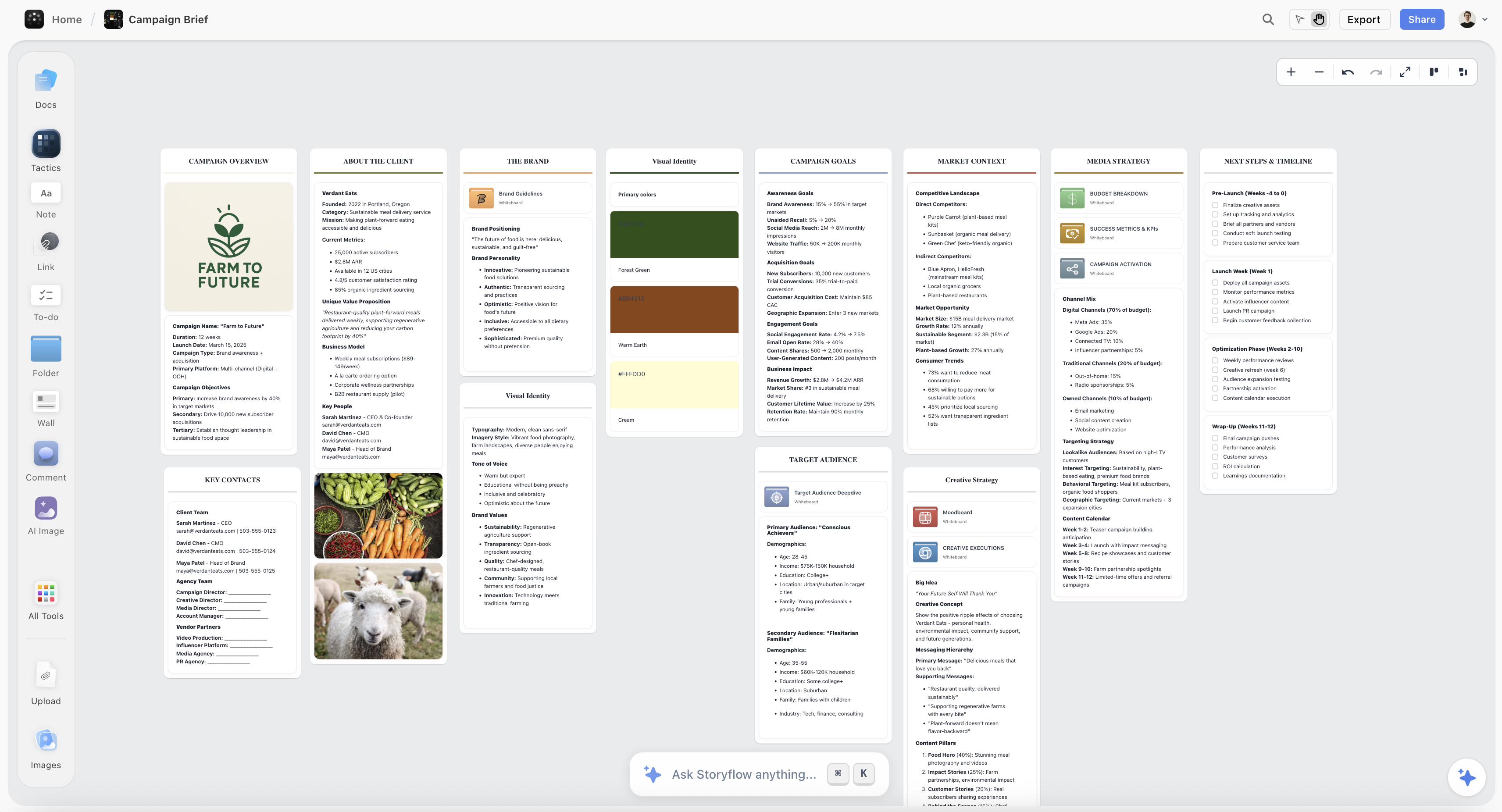

A visual workspace where AI sees everything at once: Storyflow's free tier works for this guide — your problem frame, divergence ideas, and convergence notes all on one canvas that the AI reads before responding.

How to Run an Ideation Session with AI: Step by Step

Step 1: Write Your Problem as a How Might We Question

Output: 2–3 HMW questions, broad to narrow

The single biggest predictor of ideation quality is how precisely the problem is framed before the session starts. A How Might We (HMW) question is the most reliable framing format because the three words each do specific cognitive work: "how" implies a solution exists; "might" removes the pressure of finding the right answer; "we" signals shared ownership.

Most problem statements fail because they describe a goal rather than a problem. "How might we get more sign-ups" is a goal. "How might we help someone understand the value of Storyflow within their first ten minutes?" is a problem. The difference in specificity produces a completely different set of ideas. Write two to three HMW questions at different levels of specificity — broader ones open more creative territory, narrower ones produce more immediately actionable ideas.

Example: A content team developing a new B2B series might write: "HMW help our audience learn a new concept without feeling like they are reading documentation?" Then narrower: "HMW turn our product changelog into content existing users actually want to read?"

Where Storyflow helps: Post your HMW questions at the top of your Storyflow canvas before the session starts. The AI assistant reads them as context when you bring it in later — meaning its suggestions are grounded in your specific problem, not generic prompts.

Common mistake: Writing the HMW question too broadly ('HMW be more creative?') so every idea technically qualifies, which makes convergence impossible.

Step 2: Brief Participants and Collect Pre-Session Ideas

Output: 15–30 ideas submitted before the session

Send your HMW questions to participants 24 hours before the session with one instruction: write down three ideas independently before the meeting. This single step changes everything. When participants arrive with ideas already formed, the session starts with real material rather than the awkward silence of a cold start.

Research from MIT Sloan found that async pre-work before synchronous ideation increases idea diversity by 38%. The reason is anchoring: when one person speaks first in a live session, everyone else's ideas shift toward what was just said. Pre-session solo work removes the anchor entirely.

Ask participants to submit ideas as written sentences, not labels. "Use existing customer interviews as the narrative spine of the series" is workable. "Customer content" is not.

Where Storyflow helps: Have each participant add their pre-session ideas as cards on the shared Storyflow canvas. You arrive at the session with visible, organised material — not blank sticky notes waiting to be filled.

Common mistake: Skipping the pre-session brief because it feels like admin. The cold-start session it prevents takes 20 minutes to warm up and produces fewer unique ideas than the brief itself.

Step 3: Run Your Human Divergence Round First

Output: 15–30 raw ideas, no AI involved

The first 20 minutes of the live session belong to the humans in the room. Do not open AI tools during this phase. The reason is practical: AI suggestions anchor thinking. When an AI produces a confident list early in divergence, people stop generating and start reacting — you lose the associative thinking that produces your best material.

Run one structured technique for 15–20 minutes. With four or fewer participants, use Round-Robin: each person shares one idea per turn, continuing until ideas are exhausted. Working solo, use Crazy Eights — eight ideas in eight minutes on paper, then select three worth developing.

Example: A content team running a B2B series ideation might produce: 'a live session where customers narrate their own use case,' 'a newsletter written from the perspective of a new user's first 30 days,' 'a 5-episode mini-documentary about one customer's business problem.' The documentary format became the final direction in a real project — it never would have survived an early AI session because it sounds too slow before you have the context.

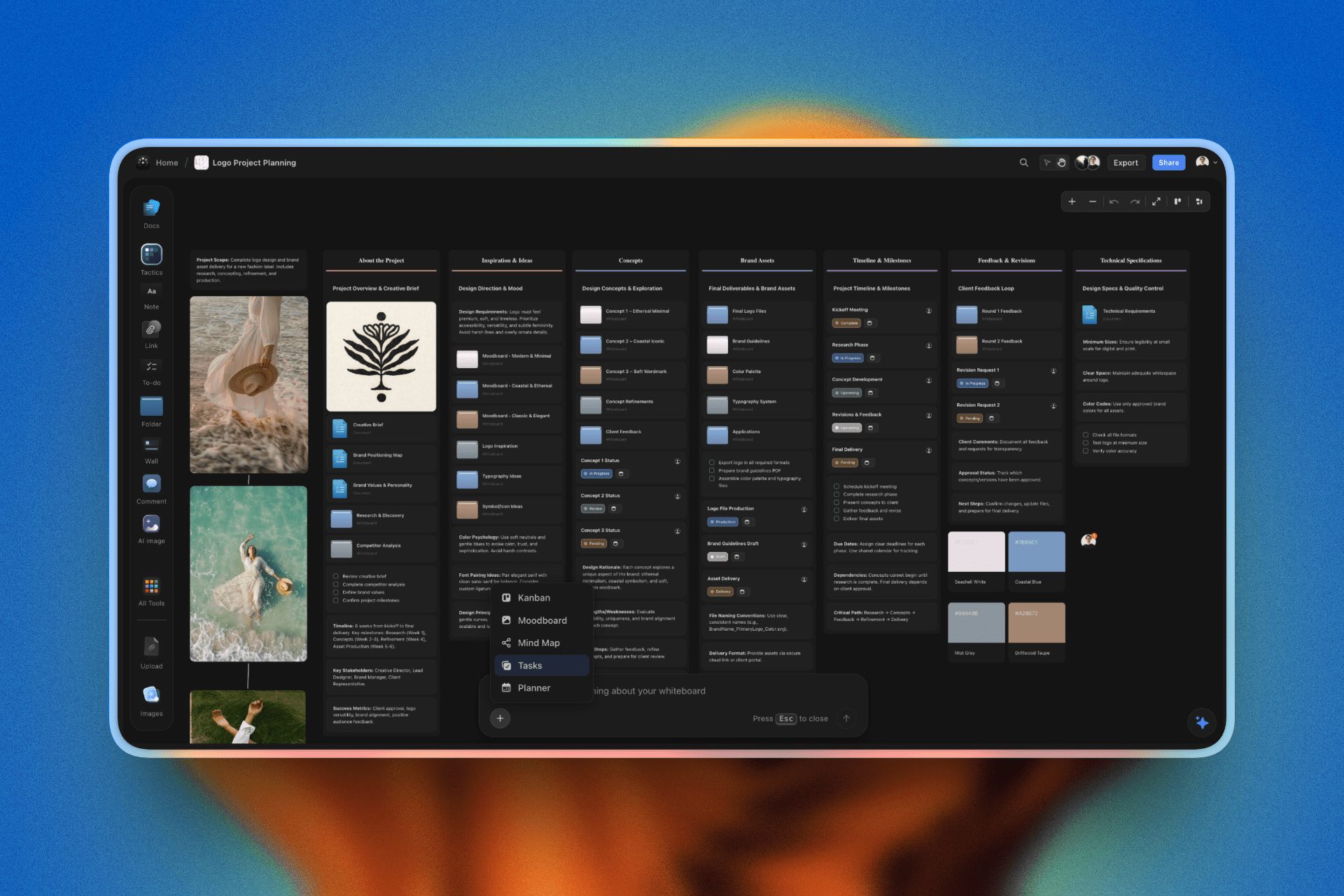

Where Storyflow helps: Storyflow's mind map view is ideal for this phase — you place ideas spatially, which helps you see categories and relationships forming before you have explicitly named them.

Common mistake: Letting evaluation creep into divergence. 'That will never work' kills the ideas that come after it, not just the one being judged. Enforce the no-evaluation rule explicitly at the start.

Step 4: Use AI to Expand and Challenge Your Ideas

Output: Expanded ideas with challenged assumptions

Now bring the AI in. With your divergence output visible on the canvas, ask it to do two things: expand on your three strongest ideas (what versions or variations exist?) and actively challenge your assumptions (what are you taking for granted that might not be true?).

This phase belongs here — after human divergence, not instead of it. At this point the AI has real material to work with. Its pattern-recognition is actually useful: it can find analogies in adjacent industries, surface variations you have not considered, and identify buried assumptions in each idea.

Three prompts that consistently produce strong output: 'For each of my top three ideas, give me three variations that push the concept further.' / 'What is the most common assumption buried in each of these ideas — what happens if we flip it?' / 'Which of these ideas has been done in a different industry? What can we take from how it worked there?'

Where Storyflow helps: In Storyflow, the AI reads your entire canvas before responding — your HMW question, pre-session ideas, and divergence output all in context. This makes its suggestions specific to your actual problem rather than generic brainstorming responses.

Common mistake: Asking the AI to generate ideas from scratch instead of using it to expand what you have already generated. Starting fresh with AI at step 4 wastes the divergence work and produces the same surface-level output you would have got by opening the session with AI.

Step 5: Cluster Ideas and Find the Patterns

Output: 3–5 named insight clusters

With 20–40 ideas on the canvas, you have too many to evaluate individually. The next step is clustering — grouping ideas by the underlying insight they share, not by surface similarity. This distinction is critical.

Two ideas that look different might share the same core insight: 'write the series from the customer's perspective' and 'use real support tickets as the editorial calendar' both express 'customer language is more valuable than brand language.' They belong to the same cluster.

Example: The content team clustered 24 ideas into: Customer-led narrative (5 ideas), Format experimentation (6 ideas), Distribution channel inversion (7 ideas), and Product as protagonist (6 ideas). The cluster names became the territories they evaluated — not the individual ideas.

Where Storyflow helps: Group cards visually on the Storyflow canvas by cluster. The spatial layout makes pattern recognition much faster than a text list — you can see which clusters have more material and which need more development.

Common mistake: Clustering by topic ('social media ideas,' 'video ideas') instead of by insight. Topic clustering produces redundant evaluation — you already know the format. You need to know the creative direction.

Step 6: Run Convergence Against Your Criteria

Output: Two scored candidate directions

Score each cluster — not individual ideas — against the evaluation criteria you defined before the session. This is where most teams fail: criteria were never written down, so convergence becomes a negotiation between opinions rather than an evaluation against standards.

Three to four criteria are enough. For a content team: (1) Alignment with the HMW question, (2) Feasibility within current resources, (3) Novelty relative to competitors, (4) Potential for audience return. Score each cluster on a 1–3 scale per criterion, then sum the scores. The two highest-scoring clusters are your candidates for development.

This takes 15–20 minutes. If it takes longer, your criteria are too vague or too many people are involved in the scoring. Write criteria before divergence. Use them during convergence. Do not invent them at this stage.
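The scoring arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a Storyflow feature: the function name `rank_clusters`, the criterion keys, and every score in `example_scores` are hypothetical values chosen for the example. Only the cluster names and the 1–3 scale come from this guide.

```python
# The four criteria from the content-team example, as short keys (assumed names).
CRITERIA = ["hmw_alignment", "feasibility", "novelty", "audience_return"]


def rank_clusters(scores):
    """Return (cluster, total) pairs sorted by summed score, highest first."""
    totals = {}
    for cluster, per_criterion in scores.items():
        for criterion in CRITERIA:
            value = per_criterion[criterion]  # KeyError if a criterion was skipped
            if value not in (1, 2, 3):
                raise ValueError(f"{cluster}/{criterion}: expected 1-3, got {value}")
        totals[cluster] = sum(per_criterion[c] for c in CRITERIA)
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores, for illustration only.
example_scores = {
    "Customer-led narrative": {"hmw_alignment": 3, "feasibility": 2, "novelty": 3, "audience_return": 3},
    "Format experimentation": {"hmw_alignment": 2, "feasibility": 3, "novelty": 2, "audience_return": 2},
    "Distribution channel inversion": {"hmw_alignment": 2, "feasibility": 1, "novelty": 3, "audience_return": 2},
    "Product as protagonist": {"hmw_alignment": 3, "feasibility": 3, "novelty": 1, "audience_return": 1},
}

top_two = rank_clusters(example_scores)[:2]
print(top_two)
```

Rejecting any score outside 1–3 and any missing criterion keeps the comparison honest: every cluster is measured on the same scale against the same written standards.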

Where Storyflow helps: Storyflow's Tactics system includes guided convergence frameworks that prompt you to apply criteria systematically, which reduces the risk of scoring by consensus rather than criteria.

Common mistake: Evaluating ideas on how exciting they sound in the room rather than how well they address the problem frame. Excitement in a session is not the same as quality of the concept.

Step 7: Develop Your Top Two Directions into Actionable Concepts

Output: Two written concept documents

The output of convergence is two candidate directions. Now develop each one enough to make a real decision. For each: a one-paragraph concept description, three reasons it addresses the HMW question, the main risk or unknown, and the first concrete action needed to test it.

This is the second point in the session where AI is especially useful. Taking one direction at a time, ask: 'What are the three most important things this concept needs to succeed? What would make it fail? What similar concepts exist — what can we learn from how they performed?'

Keep both directions alive through this step. The temptation is to converge prematurely on the option that generated the most energy in the room. Energy in a session is not the same as quality of the concept. More than once, the quieter option has become the stronger brief on paper.

Where Storyflow helps: Storyflow's AI Planner can take your concept description and generate a structured action plan — identifying what needs to happen in what order to move from concept to execution, turning a session output directly into a project starting point.

Common mistake: Developing only one direction and calling it done. Comparing two developed concepts almost always surfaces strengths and weaknesses you would have missed with only one option on the table.

Step 8: Document the Decision and the Path Not Taken

Output: Session summary: decision, rationale, and paths not taken

The final deliverable of an ideation session is not the winning idea — it is the documented record of what was explored, what was selected, why, and what was set aside. This documentation becomes essential when the selected direction encounters problems, or when a new team member joins mid-project and needs to understand the creative logic.

Write a one-page session summary: your HMW question, the clusters that emerged, the criteria used, the scores, the selected direction, and one sentence on why each rejected cluster was set aside. This takes 20 minutes and saves you from re-running an entire ideation session six weeks later because no one remembers what was already explored.
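If you keep the summary in a structured form, a small record type can flag gaps before the session closes. The sketch below is one possible shape, assuming Python 3.9 or later. The class name `SessionSummary`, its field names, the scores, and the placeholder reasons are all hypothetical and not part of any Storyflow export format.

```python
from dataclasses import dataclass, field


@dataclass
class SessionSummary:
    hmw_question: str
    criteria: list[str]
    cluster_scores: dict[str, int]  # cluster name -> summed score
    selected: str
    set_aside: dict[str, str] = field(default_factory=dict)  # cluster -> one-sentence reason

    def undocumented_clusters(self) -> list[str]:
        """Clusters that were neither selected nor given a reason for being set aside."""
        return [
            name
            for name in self.cluster_scores
            if name != self.selected and name not in self.set_aside
        ]


# Illustrative values only; scores and reasons are placeholders.
summary = SessionSummary(
    hmw_question="HMW turn our product changelog into content existing users actually want to read?",
    criteria=["HMW alignment", "Feasibility", "Novelty", "Audience return"],
    cluster_scores={
        "Customer-led narrative": 11,
        "Format experimentation": 9,
        "Distribution channel inversion": 8,
        "Product as protagonist": 8,
    },
    selected="Customer-led narrative",
    set_aside={
        "Format experimentation": "Placeholder reason written by the team.",
        "Distribution channel inversion": "Placeholder reason written by the team.",
    },
)

print(summary.undocumented_clusters())
```

A non-empty result from `undocumented_clusters()` means at least one path not taken still lacks its one-sentence rationale.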

80% of plans that fall apart by week four do so because the decision rationale was never written down — the team is fighting a phantom, not the actual project challenge.

Where Storyflow helps: Your Storyflow canvas already contains the entire session — the pre-session ideas, divergence output, clusters, and criteria scores. Add a 'Session Summary' card at the top with the final decision and reasoning, and the record is complete and searchable for the life of the project.

Common mistake: Ending the session with a verbal agreement on direction but no written documentation. Two weeks later, three people remember the decision differently, and the brief reflects whoever wrote it most confidently rather than what the team actually decided.

Mind map view — cluster divergence ideas spatially to find patterns before naming them

After convergence: move selected directions directly into execution stages without leaving the canvas

The 8-Step Process at a Glance

- Frame the problem — Write 2–3 HMW questions before anything else

- Brief participants — Send HMW questions 24 hours before; collect pre-session ideas

- Diverge as humans first — 15–20 minutes of structured generation before any AI

- Bring AI in to expand and challenge — Use AI on your material, not instead of it

- Cluster by insight — Group ideas by the underlying approach, not the surface topic

- Converge against written criteria — Score clusters, not individual ideas

- Develop top two directions — Write a full concept document for each before deciding

- Document the decision — Record what was chosen, why, and what was set aside

Tips and Best Practices

Set the criteria before the session, not during convergence

The most common reason good ideas get rejected is that evaluation criteria shift mid-session to accommodate the idea the loudest voice prefers. If you define criteria before divergence starts, convergence becomes analytical. If you define them during convergence, they become rationalisation. This is the difference between a session that produces a brief and one that produces a political outcome.

Keep the problem frame visible throughout the entire session

Print it or pin it at the top of the canvas. Every time a new idea surfaces, the test is: does this address the How Might We question? I have run sessions where the most exciting idea was a complete non-answer to the actual problem — and because it was exciting, it almost went into the brief unchallenged. Having the HMW visible prevents this.

Give AI your constraints, not just your problem

AI suggestions improve significantly when you include constraints: budget, timeline, team size, channel, audience. 'Give me ideas for a B2B content series, 10-person team, four-week launch window, targeting mid-market SaaS companies' produces better output than 'give me content series ideas.' In Storyflow, these constraints live on the canvas permanently — the AI reads them every time you ask a question.
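For teams that paste prompts into a chat tool by hand, the same idea can be expressed as a small helper that always attaches the constraints to the request. This is a sketch under stated assumptions: `build_prompt` is a hypothetical function name, and the output format is one reasonable choice rather than a format any particular AI tool requires.

```python
def build_prompt(request: str, constraints: dict[str, str]) -> str:
    """Combine a request with a labelled list of constraints."""
    lines = [request.strip(), "", "Constraints:"]
    lines += [f"- {name}: {value}" for name, value in constraints.items()]
    return "\n".join(lines)


# Constraints taken from the example in the paragraph above.
prompt = build_prompt(
    "Give me ideas for a B2B content series.",
    {
        "Team size": "10 people",
        "Timeline": "four-week launch window",
        "Audience": "mid-market SaaS companies",
    },
)
print(prompt)
```

Keeping constraints in a dictionary means they are written once and reused across every prompt in the session, which is the manual equivalent of leaving them on the canvas.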

Use async pre-work for remote sessions

Remote sessions that start cold produce weak first rounds because people are context-switching into the problem in real time. A 24-hour async brief period where each participant generates ideas independently before the live session produces a dramatically better starting point. This takes 15 minutes per participant and saves 30 minutes of awkward warm-up in the actual session.

Time-box divergence ruthlessly

The most common mistake in ideation facilitation is letting divergence run long because the energy is good. After 25 minutes, you are generating redundant variations, not new ideas. Cut it at 20 minutes. The best ideas are in the first 15. The next 30 minutes of an overlong divergence session produce almost nothing that was not already in the room.

Common Mistakes to Avoid

Mistake: Opening an AI chat tool first and asking it to generate ideas

Why it happens: It feels productive — you immediately have content on the page.

What goes wrong: A list of plausible-sounding ideas that anchor everyone else's thinking for the rest of the session, eliminating genuinely unusual ideas before they have a chance to surface.

What to do instead: Run human divergence for 15–20 minutes first, then bring AI in to expand what you have already generated.

Mistake: Skipping the problem framing step

Why it happens: Teams jump to ideation because framing feels like admin and ideation feels like work.

What goes wrong: A session full of ideas that answer a different question than the one you actually needed to solve.

What to do instead: Write the HMW question before you open the calendar invite — it determines the quality of everything that follows.

Mistake: Evaluating ideas during divergence

Why it happens: "That will never work" is said without thinking, usually by the most senior person in the room.

What goes wrong: People self-censor unusual ideas immediately, and the ideas that follow are shaped to avoid the same reaction, producing homogeneous output.

What to do instead: Enforce the rule explicitly at the start: divergence has no evaluation. Label any evaluation attempt and move on.

Mistake: Converging to the most popular idea

Why it happens: Consensus feels like alignment.

What goes wrong: The most popular idea in a group setting is usually the most familiar one — social pressure and anchoring push groups toward safety, not quality.

What to do instead: Use written criteria and individual scoring before group discussion. Discuss the scores, not the ideas.

Mistake: Ending the session without documentation

Why it happens: The session ends with energy and apparent clarity, and nobody wants to slow it down.

What goes wrong: Six weeks later, three people have different memories of what was decided, and the brief reflects whoever made the clearest notes in their personal notebook.

What to do instead: Spend 20 minutes at the end of every session writing the one-page summary. It is the most valuable 20 minutes of the entire process.

A complete ideation session in Storyflow — HMW question, divergence cards, clusters, and convergence criteria all on one canvas the AI can read

FAQ: How to Run an Ideation Session with AI

How long does it take to run an ideation session with AI?

A complete ideation session takes 75–90 minutes for the live portion, plus a 24-hour async pre-work phase. Preparation — writing the HMW question and sending the brief — takes 20–30 minutes. Documentation takes another 20 minutes after the session. Total investment is around 2.5 hours spread across two days. Teams that skip pre-work and documentation typically spend the same time — just in repeated meetings and re-alignment conversations later.
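The time estimate can be checked with simple arithmetic. The sketch below sums the ranges quoted in this guide. The variable and function names are illustrative, and the 15 minutes of pre-work per participant is taken from the tips section and counted once, as one person's share.

```python
# Minutes as (low, high) ranges, taken from the figures quoted in this guide.
PHASES = {
    "preparation (HMW question and brief)": (20, 30),
    "live session": (75, 90),
    "documentation": (20, 20),
}
PREWORK_PER_PARTICIPANT = 15  # async idea generation before the session


def total_minutes(phases, extra=0):
    """Sum the low and high ends of each phase, plus optional extra minutes."""
    low = sum(lo for lo, _ in phases.values()) + extra
    high = sum(hi for _, hi in phases.values()) + extra
    return low, high


facilitator = total_minutes(PHASES)
with_prework = total_minutes(PHASES, extra=PREWORK_PER_PARTICIPANT)

for label, (low, high) in [("facilitator only", facilitator), ("including pre-work", with_prework)]:
    print(f"{label}: {low}-{high} minutes ({low / 60:.1f}-{high / 60:.1f} hours)")
```

Under these assumptions the total lands between roughly two and two and a half hours, which is consistent with the "around 2.5 hours" figure at the upper end of the range.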

How is running an ideation session with AI different from traditional brainstorming?

Traditional brainstorming runs divergence and convergence simultaneously, which produces anchoring and groupthink. This AI-assisted process separates those phases explicitly — human divergence runs first, then AI expands and challenges what was generated rather than replacing the generation step. The result is more novel ideas grounded in the actual problem, rather than either pure human opinion or AI suggestions disconnected from team context.

Can I run this process solo, or does it require a team?

You can run every step solo. In solo sessions, use Crazy Eights for divergence — 8 ideas in 8 minutes on paper — rather than Round-Robin, and use the AI more actively in the expansion step since you do not have other humans challenging your assumptions. Budget 60 minutes rather than 90 for a solo session. Solo sessions converge faster because there is no social negotiation, but you lose the diverse perspective a multi-person session provides.

How many people should be in an ideation session?

Three to five people is the research-supported sweet spot. Below three, you lose perspective diversity. Above seven, equal contribution becomes difficult and anchoring effects increase. If your stakeholder group is larger, run parallel sessions of three to five people each with the same HMW question, then merge the clusters in convergence. This produces better output than a ten-person session and takes the same total time.

Can I do this with ChatGPT instead of Storyflow?

You can run the AI expansion steps with ChatGPT — it handles idea expansion and assumption-challenging prompts well. The limitation is context persistence: ChatGPT does not retain your HMW question, divergence output, and criteria in one place where it can read them simultaneously. You end up copy-pasting context into each prompt manually. Storyflow reads your entire canvas before responding, so later-session prompts are informed by everything generated earlier in the same session.

How do I know if my HMW question is good enough?

A well-formed HMW question passes three tests: specific enough that a completely wrong answer is obviously wrong, open enough that multiple solution types are valid, and describes a problem rather than a solution. "HMW make our onboarding faster" fails the third test — it presupposes speed as the solution. "HMW help new users feel competent in their first session" passes all three. If you can immediately tell whether any given idea addresses the question, it is specific enough.

What if the team keeps generating the same type of idea?

This is category fixation — the team has mentally settled on one solution type and keeps varying it. The fix is a constraint technique: impose an artificial limitation that makes the current solution category impossible. "Solve this with no video content" forces a team stuck on video ideas to explore other formats. "Solve this with no budget" forces a team stuck on paid solutions to explore organic approaches. Storyflow's Tactics system includes constraint prompts designed for this scenario.

What do I do with ideas that were not selected?

Document them in the session summary under 'Explored but not selected' with one sentence on why each was set aside. This documentation has practical value: when the selected direction encounters obstacles, rejected ideas become the first place to look for pivots. Several of the most interesting project pivots I have seen came from an idea rejected in session one for being too ambitious, then revisited three weeks later when the initial direction ran into a constraint that made the originally 'too ambitious' option suddenly viable.

How do I handle a session where one person dominates?

Use Round-Robin structure and enforce it explicitly: one idea per person per turn, no evaluation during divergence, no one speaks twice in a row. The facilitator's job is to protect air time, not manage content. For remote sessions, use async pre-work — when ideas are submitted in writing before the session, no one has the advantage of speaking first, and individual idea volume becomes visible to everyone, which naturally balances contribution.

Start Your First AI-Assisted Ideation Session Today

The thing that stops most people from running better ideation sessions is not skill or access to tools — it is the sense that the current approach works well enough. It does not. It produces familiar results at a familiar pace, which is easy to mistake for a functioning process. The sessions that produce genuinely novel directions feel different from the first five minutes: the problem frame is specific, divergence is uncomfortable because the obvious ideas run out faster than expected, and the AI is doing something useful rather than generating a list you could have written yourself.

Open Storyflow, create a new canvas, and write your How Might We question at the top. The free tier gives you everything you need to run this full process. Storyflow's Ideation Tactic walks you through the divergence and convergence phases with structured prompts — you do not need to hold the process in your head while also facilitating the session. The first time takes 90 minutes. With a template and a team that has done it once, it takes 60.

Every consistently original creative or strategic team you admire has a reliable process for this — not exceptional talent, a reliable process. Learning to run this session is how you stop depending on when inspiration arrives and start producing it on schedule.

Related Reading

The concept guide that explains the mechanisms behind each phase of this process, and why the structure produces better ideas than unstructured sessions.

A full comparison of the tools available for the divergence phase, if you want to explore alternatives to the workflow described above.

The natural next step after ideation — turning clusters into connected visual structures that inform a project brief.

Applies this ideation process specifically to narrative development for filmmakers, series creators, and documentary makers.

Compares the visual canvas tools that support the spatial clustering and convergence phases of this process.
